This App Helps People With Visual Impairments See

We like to consider ourselves color enthusiasts. From color blocking to graffiti to all things Pantone and everything (and we mean everything) in between, we can’t get enough. And thanks to some new technology, people with visual impairments can now experience colors and shapes through music.

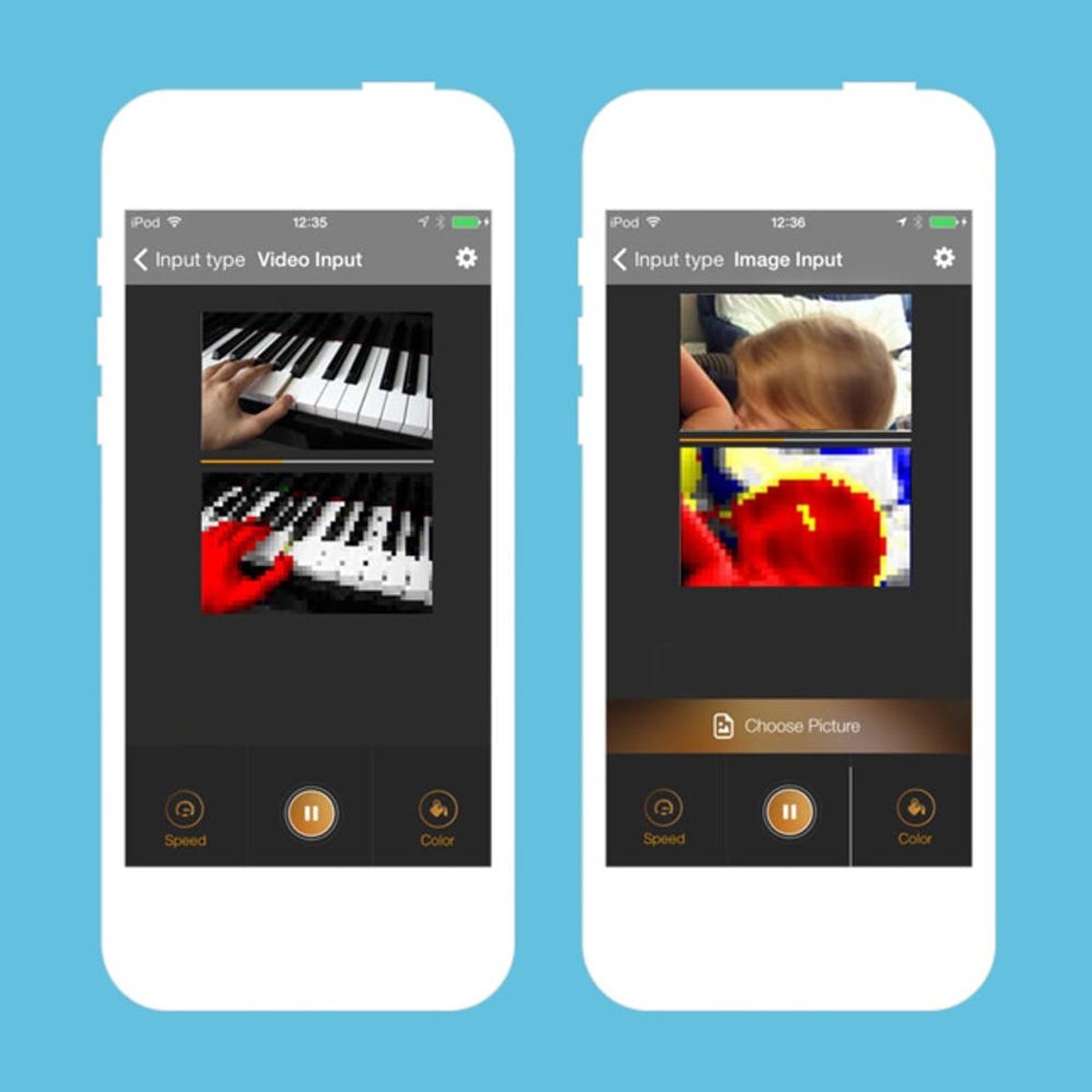

Translating images into sound isn’t anything new, but scientists are slowly learning more about how frequencies can be altered to create clearer pictures. The latest in this research comes from neuroscientist Amir Amedi, who has produced a free iOS app called EyeMusic. The app’s software scans an image and reproduces it through sound. Each pixel gets its own sound and pitch, and together those sounds reveal the image you scanned. The color and shape of an image or text are conveyed by how high the pitch is, where the pixel is located and which instrument is used.
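For the curious, here is a rough sketch of how a pixel-to-sound mapping like this could work. To be clear, this is our own illustration and not EyeMusic's actual code: we are assuming that columns are played left to right over time, that higher rows get higher pitches on a pentatonic scale, and that each color is assigned an instrument. The function names, the base note and the color-to-instrument table are all made up for the example.

```python
# Illustrative sketch only; NOT EyeMusic's actual algorithm.
# Assumptions (ours): columns play left to right, higher rows sound
# higher, and each color maps to a placeholder instrument name.

PENTATONIC_STEPS = [0, 2, 4, 7, 9]  # semitone offsets within one octave

# Hypothetical color-to-instrument table, chosen for the example.
INSTRUMENT_FOR_COLOR = {
    "white": "choir",
    "blue": "trumpet",
    "red": "organ",
    "green": "reed",
    "yellow": "strings",
}


def row_to_midi_pitch(row, height, base_note=48):
    """Map a row index to a MIDI note number; the top row is the highest."""
    steps_from_bottom = height - 1 - row
    octave, degree = divmod(steps_from_bottom, len(PENTATONIC_STEPS))
    return base_note + 12 * octave + PENTATONIC_STEPS[degree]


def image_to_notes(image):
    """Turn a grid of color names (None = empty) into note events.

    Returns a list of (time_step, midi_pitch, instrument) tuples,
    ordered column by column from left to right.
    """
    height = len(image)
    width = len(image[0]) if image else 0
    notes = []
    for col in range(width):          # horizontal position becomes time
        for row in range(height):     # vertical position becomes pitch
            color = image[row][col]
            if color is None:
                continue              # empty pixels stay silent
            pitch = row_to_midi_pitch(row, height)
            notes.append((col, pitch, INSTRUMENT_FOR_COLOR[color]))
    return notes


if __name__ == "__main__":
    tiny_image = [
        ["red", None],
        [None, "blue"],
    ]
    for event in image_to_notes(tiny_image):
        print(event)
```

Feeding these note events to a synthesizer would be the next step. We left that out so the sketch stays focused on the mapping itself.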

Back in 2012, Amedi explored The vOICe, an Android and Windows app built on the same concept as EyeMusic. It didn’t convey color, but it was still an amazing feat, considering it allowed users to read letters and observe their surroundings. Knowing that differences in pitch, location and instrumentation can let the brain process color on top of sight, for anyone and everyone, sounds like music to our eyes ;)

Do you think these software programs will be put into a wearable? What are your thoughts? We’d love to know in the comments below!

(h/t Vox)